I Think You'll Find It's a Bit More Complicated Than That


Authors: Ben Goldacre

I contacted the Home Office again. ‘The figure is over sixty and it comes from the number of disclosures made where there was a conviction of a sexual offence with a minor or violence against a minor. In total twenty-one disclosures were made specifically about registered sex offenders (RSO), a further eleven disclosures were made, for example relating to convictions for violent offending. These people had access to over sixty children.’

I’m not sure this is self-evident. The academics who wrote the report couldn’t work out where the number sixty came from, and at least two pieces have appeared trying to unpick it, each coming up with different answers from me and the Home Office. The excellently named Conrad Quilty-Harper in the Telegraph and a promising new website called FullFact both – very reasonably – tried adding various categories of numbers from the academic report, including a figure on social-worker activity which seemed to make up the numbers.

I’m not saying the figure sixty is wrong. While what it represents was probably overstated – by the Home Office and the press reports – the number itself isn’t absurd. But it does seem odd that just finding out where it came from involved so much mucking about, and it seems even odder to ignore the robust figures in a long academic report that you’ve commissioned (the scheme wasn’t cheap compared to, say, social-worker salaries), and instead build your press activity around one opaque figure constructed, ever so slightly, somewhere, it seems, on the back of an envelope.

Guardian, 6 June 2009

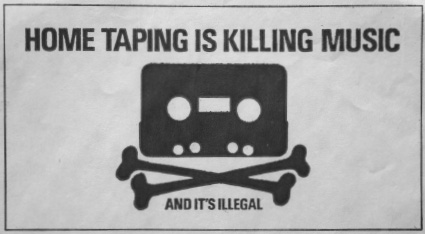

You are killing our creative industries. ‘Downloading Costs Billions’, said the Sun. ‘MORE than seven million Brits use illegal downloading sites that cost the economy billions of pounds, Government advisors said today. Researchers found more than a million people using a download site in ONE day and estimated that in a year they would use £120 billion worth of material.’

That’s about a tenth of our GDP. No wonder the Daily Mail was worried too: ‘The network had 1.3 million users sharing files online at midday on a weekday. If each of those downloaded just one file per day, this would amount to 4.73 billion items being consumed for free every year.’

Now, I am always suspicious of anything on piracy from the music industry, because it has produced a lot of dodgy figures over the years. I also doubt that every download is lost revenue, since, for example, people who download more music also buy more music. I’d like more details.

So where do these notions of so many billions in lost revenue come from? I found the original report. It was written by some academics you can hire in a unit at UCL called CIBER, the Centre for Information Behaviour and the Evaluation of Research (which ‘seeks to inform by countering idle speculation and uninformed opinion with the facts’). The report was commissioned by a government body called SABIP, the Strategic Advisory Board for Intellectual Property Policy.

On the billions lost it says: ‘Estimates as to the overall lost revenues if we include all creative industries whose products can be copied digitally, or counterfeited, reach £10 billion (IP Rights, 2004), conservatively, as our figure is from 2004, and a loss of 4,000 jobs.’

What is the origin of this conservative figure? I hunted down the full CIBER documents, found the references section, and followed the web link, which led to a 2004 press release from a private legal firm called Rouse which specialises in intellectual property law. This press release was not about the £10 billion figure. It was, in fact, a one-page document which simply welcomed the government setting up an intellectual property theft strategy. In a short section headed ‘Background’, among five other points, it says: ‘Rights owners have estimated that last year alone counterfeiting and piracy cost the UK economy £10 billion and 4,000 jobs.’ So this authoritative government figure, from an academic study, in fact comes from an industry estimate, made as an aside, five years earlier, in a short press release from one law firm.

But what about all those other figures in the media coverage? Lots of it revolved around the figure of 4.73 billion items downloaded each year, worth £120 billion. This means each downloaded item – software, movie, mp3, ebook – is worth £25 on average. Now, before we go anywhere, this seems very high. I am not an economist, and I don’t know about their methods, but to me, an appropriate comparator for someone who downloads a film to watch it once might be the rental value, for example, not the sale value. I’d also like to suggest that sometimes, perhaps quite often, someone who downloads a £1,000 professional 3D animation software package to fiddle about with at home may not use it more than three times.

In any case, that’s £175 a week, or £9,100 a year, potentially not being spent by millions of people. Is this really lost revenue for the economy, as reported in the press? Plenty of those downloading will have been schoolkids, or students, who may not have £9,100 a year to spend. Even if they weren’t, that figure is still about a third of the average UK wage. Before tax. Oh, and the government’s figures were wrong: it was actually 473 million items, and £12 billion, so the value of each downloaded item was still £25, but it exaggerated the amount of money ‘lost’ by a factor of ten, in the original executive summary, and in the press release. These were changed quietly after the errors were pointed out by a BBC journalist, but I can find no public correction for the many people who were misled.
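For anyone who wants to check the sums, here is a minimal sketch that simply replays the arithmetic quoted above. Every number comes from the press coverage and the corrected report as described in this piece; the function and variable names are made up for illustration, and the one-file-a-day, 365-days-a-year assumption is the newspapers’ own.

```python
# Figures as quoted in the column above; no new data is introduced.
USERS_ONLINE = 1_300_000        # users sharing files at midday (Daily Mail quote)
REPORTED_ITEMS = 4.73e9         # items per year, as first published
REPORTED_VALUE_GBP = 120e9      # pounds per year, as first published
CORRECTED_ITEMS = 473e6         # items per year, after the quiet correction
CORRECTED_VALUE_GBP = 12e9      # pounds per year, after the quiet correction


def items_per_year(users: int, files_per_day: int = 1) -> int:
    """Yearly downloads if every user takes `files_per_day` files, 365 days a year."""
    return users * files_per_day * 365


def value_per_item(total_value_gbp: float, items: float) -> float:
    """Average value implied for a single downloaded item."""
    return total_value_gbp / items


recomputed_items = items_per_year(USERS_ONLINE)
overstatement = REPORTED_ITEMS / recomputed_items
price_reported = value_per_item(REPORTED_VALUE_GBP, REPORTED_ITEMS)
price_corrected = value_per_item(CORRECTED_VALUE_GBP, CORRECTED_ITEMS)

# The column rounds the per-item value to 25 pounds and assumes one item a day.
weekly_spend = 25 * 7
yearly_spend = weekly_spend * 52

print(f"items per year from 1.3m users: {recomputed_items:,}")
print(f"published figure is about {overstatement:.0f} times larger")
print(f"implied value per item: {price_reported:.2f} vs {price_corrected:.2f}")
print(f"one item a day: {weekly_spend} a week, {yearly_spend} a year")
```

Multiplying 1.3 million users by 365 days lands in the hundreds of millions, not the billions, which is the factor-of-ten slip. The implied value per item is the same before and after the correction, because items and pounds were both overstated tenfold.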

So I asked SABIP what steps they took to notify journalists of their error, which resulted in their absurdly huge claims being widely reported in news outlets around the world. They refused to answer my questions in emails; insisted on a phone call (always a warning sign); told me that they had taken steps, but wouldn’t say what; explained something about how they couldn’t be held responsible for lazy journalism; then, bizarrely, after ten minutes, tried to tell me retrospectively that the whole call was actually off the record, that I wasn’t allowed to use the information in my piece, but that they had answered my questions, and so they didn’t need to answer on the record, but I wasn’t allowed to use the answers, and I couldn’t say they hadn’t answered, I just couldn’t say what the answers were. Then the PR man from SABIP demanded that I acknowledge, in our phone call, formally, for reasons I still don’t fully understand, that he had been helpful.

I think it’s OK to be confused and disappointed by this. Like I said: as far as I’m concerned, everything from this industry is false, until proven otherwise.

Guardian, 18 July 2009

We’d all like to help the police do their job well. They, in turn, would like to have a massive database with DNA profiles of everyone who has been arrested, but not convicted of a crime. We worry that this is intrusive, but some of us are willing to make concessions – on our principles and the invasion of our privacy – in the name of preventing crimes. To do this, we’d like to know the evidence on whether this database is helpful, to help us make an informed decision.

Luckily, the Home Office has now published a consultation paper on the subject. It defends the database by arguing that innocent people who have been arrested and released are basically criminals anyway, and go on to commit crimes in the future as much as guilty people do. ‘This,’ it says, ‘is obviously a controversial assertion.’ There is no reason for this assertion to be controversial: it’s a simple factual matter, and if it’s true, then you could easily assemble some good-quality evidence to prove it.

The Home Office has assembled some evidence. This study, from the Jill Dando Institute, attached to the consultation paper as an appendix, is possibly the most unclear and badly presented piece of research I have ever seen in a professional environment.

They want to show that the level of criminal activity in a group of people who have been arrested, but against whom no further action has been taken, is the same as the level of criminal activity in people who have been arrested and convicted of a crime, or who have accepted a caution.

On page 30 they explain their methods, haphazardly, scattered about in the text. They describe some people ‘sampled on 1st June 2004, 1st June 2005 and 1st June 2006’. These dates are never mentioned again. I have no idea what their plan was there. They then leap to talking about Table 2. This contains data on people, each from a ‘sample’ in 1996, 1995 and 1994, followed up for thirty months, forty-two months and fifty-four months respectively. Are these anything to do with the people from 2004, 2005 and 2006? I have no idea, and it is impossible to tell.

In fact, I have no idea what ‘sample’ means – perhaps that was the date on which they were first arrested. I don’t know why they were only followed up for thirty, forty-two and fifty-four months, instead of all the way from the 1990s to 2009. Crucially, I also don’t know what the numbers in the table mean, because that isn’t properly explained. I think it’s the number of people from the original group who have subsequently been arrested again, but there’s no way to tell.

Then they start to discuss the results from this table. They say that these figures show that arrested non-convicted people are the same as convicted people. There are no statistics conducted on these figures, so there is absolutely no indication of how wide the error margins are, and whether these are chance findings. To give you a hint about the impact of noise on their data, more people are described as having been subsequently re-arrested over the forty-two-month follow-up period than over the fifty-four-month follow-up period, which seems surprising, given that the people in the fifty-four-month group had a much longer period of time in which to get arrested.

This is before we even get on to the other problems. At a few hundred people, this study seems pretty small for one that is supposed to give compelling evidence that there is no difference between two groups, because to prove a negative like this, you generally need a very large sample, to minimise the chance of missing a true difference in the noise.

There is no evidence that they have done a ‘power calculation’ to determine the sample size they’d need, and in any case, their comparison group feels a bit rigged to me. In their ‘convicted’ sample they only count people who had a non-custodial sentence, and exclude people who got a custodial sentence, on the grounds that those people would be incapable of committing a crime during their incarceration. This also has the effect, however, of making the ‘criminal’ group really not very criminal, and so consequently a bit more likely to be similar to innocent people.
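To give a feel for what a power calculation involves, here is a rough sketch using the standard normal-approximation formula for comparing two proportions. The re-arrest rates below are entirely hypothetical, picked only for illustration; nothing here is taken from the Jill Dando Institute study. It is also the ordinary calculation for detecting a difference, whereas a study designed to show equivalence would use a related but different one.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_group(p1: float, p2: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """People needed in EACH group to tell p1 from p2.

    Two-sided z-test for two proportions, normal approximation.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


# HYPOTHETICAL re-arrest rates, chosen only for illustration.
for p1, p2 in [(0.30, 0.20), (0.30, 0.25), (0.30, 0.28)]:
    n = sample_size_per_group(p1, p2)
    print(f"{p1:.0%} vs {p2:.0%}: about {n} people per group")
```

The required sample grows quickly as the difference you want to be able to rule out shrinks, which is why a few hundred people is thin evidence for ‘no difference’.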

I could go on. Table 1 is so thoroughly ‘not as described’ as to be uninterpretable. In the text they talk about different cells on the table which are ‘solid red’, ‘stippled yellow’, and ‘blank’, when in fact the whole thing is just blue.

This research is incomprehensible and unreadable. Anybody who claims to have been persuaded by the data quoted here is telling you, loudly and clearly in the subtitles, that they don’t need to understand – or possibly even read – a piece of research in order to find it compelling. People like this are not to be trusted, and if research of this calibre is what guides our policy on huge intrusions into the personal privacy of millions of innocent people, then they might as well be channelling spirits.

I’d Expect This from UKIP, or the Daily Mail. Not from a Government Leaflet

Guardian, 15 April 2011

The government has issued a new leaflet aiming to justify the latest round of redisorganisation in the NHS. This leaflet is called ‘Working Together For A Stronger NHS’. It was produced by Number 10, it appears on the Department of Health website, and many of the figures it contains are misleading, out of date, or factually incorrect.

The leaflet begins, like much pseudoscience, with some uncontroversial truths: the number of people aged over eighty-five will double, and the cost of drugs is rising. This is all true.

Then comes the trouble. In large letters, alone on one entire page, you see: ‘If the NHS was performing at truly world-class levels we would save an extra 5,000 lives from cancer every year.’ The reference for this is a paper in the British Journal of Cancer called ‘What if Cancer Survival in Britain were the Same as in Europe: How Many Deaths are Avoidable?’

This study does not aim to predict the future: in fact, it looks at data from 1985 to 1999 – seriously – which is a very long time ago. It finds that if we’d had the same cancer survival rates – more than twenty years ago, in the eighties and nineties – as the average in the EU, then we’d have had 7,000 fewer deaths per year. Not 5,000 fewer. To put the big number in context, by this study’s calculation 6–7 per cent of UK cancer deaths in the 1990s were avoidable. Since then we’ve seen the massive 2000 NHS Cancer Plan, a new decade, and a new century. This paper says nothing about the number of lives we ‘would save’ each year by 2011, and citing it in that context is entirely misleading.