The Computers of Star Trek


Authors: Lois H. Gresh

It won't be all that long before invisible computers sense our presence in a room, cook our food, start our cars, do our laundry, design our clothing, and make it for us. Computers may even detect our emotional states and automatically know how to help us relax after a grueling day at work.

Our primary means of communicating with these computers will be the same one we use with each other: speech. By analyzing frequency and sound intensities, today's voice-recognition software can recognize more than forty thousand English words. It does this by differentiating one phoneme from another.[c]

However, to understand what someone is saying (as opposed to simply recognizing that someone has uttered the phoneme p rather than f), the software must be artificially intelligent. It's one thing for voice-recognition software to interpret a spoken command such as “Save file” or “Call Dr. Green's office.” It's quite another for software to understand “What are the chances that Picard is still a human inside Locutus?” Phonemes alone don't suffice. Thus we assume the main computer system must be artificially intelligent. But the LCARS on Star Trek never performs this function.


Many prominent researchers think that tomorrow's computers will understand not only our voices but also our body language. Already, an enormous amount of research has gone into building computers that see and interpret our facial expressions. Since 1975, the Facial Action Coding System (FACS) has been used to create facial animations that portray human emotions. These systems interpret our expressions as belonging to a limited set of emotional states and respond in programmed ways. If the imaging software detects us smiling, for instance, the computer may play some of our favorite rock and roll. If it “sees” that we're nervous or impatient, it will forgo the music and speed up its response time instead.
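To make the “limited set of emotional states” idea concrete, here is a minimal Python sketch. It assumes the imaging software has already reported which FACS action units (AUs) it detected; the rules and response settings are illustrative assumptions, not taken from any real product. AU6 (cheek raiser) plus AU12 (lip corner puller) is the standard FACS description of a genuine smile; the “nervous” rule is invented for the example.

```python
from enum import Enum


class Emotion(Enum):
    """The small, fixed set of states this toy system can recognize."""
    HAPPY = "happy"
    NERVOUS = "nervous"
    NEUTRAL = "neutral"


# Hypothetical rules: a state fires when all of its action units are present.
# AU6 + AU12 is the classic FACS smile; the NERVOUS set is purely illustrative.
RULES = [
    (Emotion.HAPPY, {6, 12}),
    (Emotion.NERVOUS, {1, 4, 20}),
]


def classify(action_units):
    """Map a set of detected action units onto one of the limited states."""
    for emotion, required in RULES:
        if required <= action_units:  # subset test: every required AU was detected
            return emotion
    return Emotion.NEUTRAL


def respond(emotion):
    """Programmed response for each state (hypothetical settings)."""
    if emotion is Emotion.HAPPY:
        return {"play_music": True, "response_delay_ms": 500}
    if emotion is Emotion.NERVOUS:
        # Skip the music and answer faster for a nervous or impatient user.
        return {"play_music": False, "response_delay_ms": 100}
    return {"play_music": False, "response_delay_ms": 500}
```

Calling `respond(classify({6, 12}))` turns on the music, while `respond(classify({1, 4, 20}))` skips it and shortens the delay. Anything the rules don't cover falls back to a neutral default, which is exactly the rigidity of a system limited to a handful of programmed states.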

When it comes to facial recognition software, the LCARS is way behind today's technology. And as for what's coming, the LCARS doesn't come close.

Here's a glimpse at where we think technology is heading. A doctor (who will be more like a bioengineer with a good bedside manner) injects micro- or nanochips beneath your skin. McCoy and Crusher do this sort of thing with crewmembers all the time. In the real future, however, the computer chips inside your body will communicate wirelessly as a distributed network.

Sprain a muscle, and the nervous system tells your brain to feel pain. Touch something hot, and the nervous system tells your brain that your fingers are burning. Hear something loud, and the nervous system tells your brain that your ears hurt. In the future, the computer chips inside your body will detect such neurotransmissions as well as many other physical symptoms (for example, heart rate and cholesterol levels) and possibly release chemical antidotes. To state it simply, your body will be a network of microprocessors.

There's nothing to stop you from linking your body network into the future's version of the Internet, where everyone else's body is also linked. You can turn on the music chip in your toe, think “Bach's Fantasia in G Major” and hear it. If there's a new recording of it that you've just learned about, you can retrieve that rendition from across the globe, hear it, and never even activate your own music chip. You can transmit a work assignment to your boss by touching his hand (or kissing his feet... or blowing it to him). In fact, all you will need to do is sit and think, and your body network will do the rest.

This seems more like our future than Worf typing commands on a keyboard and staring at a computer screen. The LCARS seems more like a dumb terminal than an artificially intelligent workstation. Besides, the LCARS will be unnecessary, even as an intelligent front end to the ship's computer. At minimum, the main Enterprise computer (if indeed such a thing exists, which is unlikely) will sense Worf's presence on the bridge simply because his body network identifies him.

In addition, it's probably evident to you by now that Worf won't need to issue voice commands, either. He'll think, “Where is Picard?” His body network will link to all the other body networks on the ship, and instantly, Picard's body network will respond.

If you think about where technology's heading, this makes perfect sense. Within a decade or two, we'll wave some fingers to indicate what we want our computers to do. The computer's sensors will visually identify our hand motions. Today's computers, even simple robots made with Lego[d] toys, use sensors to see.

Let's assume the following as givens: (1) the ship's computer recognizes and interprets the body networks (and the body language) of each individual, (2) the ship's computer includes wireless networks of individual processors, and (3) these processors communicate with each other, with the “main” computer, and with every crewmember. Our guess is that in three or four hundred years, human speech may be unnecessary in many contexts.

In fact, suppose we make three more entirely plausible assumptions: that all the ship's instrumentation is controlled through virtual-reality simulations; that people interact with the computer strictly by gestures and whispered commands; and that personal communicators consist of subcutaneous implants in the crew's throats and ears. Then we can imagine a truly bizarre scene. A person watching the bridge crew operate the ship would see only a group of people sitting in a bare room, apparently muttering to themselves while making random hand motions. This may indeed be the starship of the future, but it's lousy TV. That's why we need those keyboards, screen displays, and clearly spoken conversations. We twentieth-century viewers need visuals that we can instantly understand. To our descendants, the distinction between talking to a person and talking to a computer may be hardly worth noticing; but to us, it's very important indeed.

While we're talking about the LCARS, let's pause to think about Worf's communicator badge. If Worf isn't near an LCARS console, he may tap his badge and ask the computer to locate Captain Picard. How likely is this scenario?

It's predicted that within a few years, workers will wear tiny communicators equipped with infrared transmitters. These modern-day communicators will have the power of desktop PCs. They'll function like those in Star Trek, but they'll be even smaller. Prototypes have already been built and tested.

Today's communicators, as on Star Trek, let main-computer systems know where everyone is located. This is how lights turn on when Picard enters his quarters and how doors magically slide open for Captain Kirk. The future is now.
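The location-tracking idea can be sketched in a few lines of Python. This is a toy model under stated assumptions: each badge reports the room its wearer is in, and the ship reacts to arrivals and departures by switching room lights. The class and method names (`ShipLocator`, `report`, `where_is`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class ShipLocator:
    """Toy model: badges report locations, and rooms react when people come and go."""
    locations: Dict[str, str] = field(default_factory=dict)  # person -> current room
    lights_on: Set[str] = field(default_factory=set)         # rooms whose lights are on

    def report(self, person: str, room: str) -> None:
        """Record a badge reading and switch room lights accordingly."""
        previous = self.locations.get(person)
        self.locations[person] = room
        if previous == room:
            return  # no movement, nothing to do
        self.lights_on.add(room)  # someone arrived: lights on
        if previous is not None and previous not in self.locations.values():
            self.lights_on.discard(previous)  # last person left: lights off

    def where_is(self, person: str) -> Optional[str]:
        """Answer 'Where is X?' from the most recent badge reading."""
        return self.locations.get(person)
```

Reporting Picard in his quarters turns their lights on; a later report placing him on the bridge turns the quarters dark again, provided no one else is still there. Automatic doors would hang off the same arrival event.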

Why do crewmembers need to tap their badges to open a channel? Why not just issue a voice command to activate a communicator embedded beneath the skin of your throat? In the Star Trek future, a communicator may be so tiny that it'll be invisible and injected by a hypospray beneath the skin.

In The Next Generation episode “Legacy,” Data comments that he and Geordi can use a sensing device that “monitors bioelectric signatures of the crew in the event they get separated from the [escape] pod.” This implies that, in the Trek universe, ordinary badge-tapping communicators are unnecessary. Even in the original series (“Patterns of Force”), Kirk instructs McCoy to “prepare a subcutaneous transponder in the event we can't use our communicators.” McCoy then uses a hypospray to inject the transponder.

And if injected nanocommunicators are already a part of Star Trek, why does Geordi need his visor to see? Surely, he'd have microscopic sensors implanted in his eyes. In “Future Imperfect” (TNG), Geordi wears “cloned implants” rather than his visor. In the movie First Contact, Geordi's eyes are totally cybernetic (and quite handsome). Whatever their form, Geordi's visual implants show that the problem of translating an electronic signal into a neural one has been solved, and if we can translate in one direction, we can do the reverse.

But let's return to the ship's computer, as described by the Technical Manual.

The LCARS polls every control panel on the ship at 30-millisecond intervals. All the control panels and terminals are hooked up to the ODN. These connections exist so the main processing core and/or quadritronic optical subprocessor (QOS) instantly knows all keyboard and speech commands issued on the ship.
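A polling design like the one the Technical Manual describes might look like the following Python sketch. It is a simplified illustration under assumptions of our own: the `ControlPanel` class and its command buffer are hypothetical, and a real system would poll hardware rather than Python objects. Every 30 milliseconds, the poller sweeps all panels and collects whatever commands have accumulated.

```python
import time
from collections import deque

POLL_INTERVAL_S = 0.030  # 30-millisecond polling interval described in the Technical Manual


class ControlPanel:
    """Hypothetical console that buffers keyboard and speech commands until polled."""

    def __init__(self, name):
        self.name = name
        self.pending = deque()

    def drain(self):
        """Hand over every buffered command and empty the buffer."""
        commands = list(self.pending)
        self.pending.clear()
        return commands


def poll_once(panels):
    """One sweep: collect (panel name, command) pairs from every panel."""
    return [(panel.name, command) for panel in panels for command in panel.drain()]


def run_poller(panels, cycles):
    """Sweep all panels every POLL_INTERVAL_S seconds for a fixed number of cycles."""
    collected = []
    next_tick = time.monotonic()
    for _ in range(cycles):
        collected.extend(poll_once(panels))
        next_tick += POLL_INTERVAL_S
        # Sleep until the next scheduled tick; skip sleeping if we are already late.
        time.sleep(max(0.0, next_tick - time.monotonic()))
    return collected
```

One consequence of polling is that a command can sit in a panel for up to one full interval (here, 30 milliseconds) before the core sees it; event-driven designs instead push each command the moment it is issued.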

First, it seems odd that the main processing core stores every one of these commands. The LCARS is defined as artificially intelligent. It should recognize and interpret voice commands as well as keystroke commands. In today's world, we don't need a huge mainframe computer to store and handle all our transmissions. We use the Internet, for example, and communicate directly from PC to PC. If Worf says “Where is Picard?” to his LCARS console, it should be able to query all the other LCARS consoles on the ship.
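Here is a minimal Python sketch of the decentralized alternative: consoles that know who is standing at them and pass a “Where is Picard?” query directly to their neighbors, with no central core involved. The `Console` class and the way consoles are linked are assumptions made for illustration; the search itself is a simple breadth-first flood across the console network.

```python
from collections import deque
from typing import List, Optional, Set


class Console:
    """Hypothetical LCARS console that knows who is present and which consoles it links to."""

    def __init__(self, location: str):
        self.location = location
        self.present: Set[str] = set()
        self.peers: List["Console"] = []

    def connect(self, other: "Console") -> None:
        """Create a two-way link between this console and another."""
        self.peers.append(other)
        other.peers.append(self)

    def locate(self, person: str) -> Optional[str]:
        """Breadth-first search across linked consoles; no central core is consulted."""
        seen = {id(self)}
        frontier = deque([self])
        while frontier:
            console = frontier.popleft()
            if person in console.present:
                return console.location
            for peer in console.peers:
                if id(peer) not in seen:
                    seen.add(id(peer))
                    frontier.append(peer)
        return None
```

If the bridge console links to engineering and engineering links to the ready room, a query at the bridge still finds Picard in the ready room by hopping console to console, much as PCs exchange data over the Internet without a mainframe in the middle.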

Supposedly, information travels between an LCARS console and the main processing core at FTL speed. Why is this necessary? Fingers don't type at FTL speed. People don't speak at FTL speed. Does the LCARS contain gigantic buffers to queue Worf's typed commands and spoken ideas, or to store FTL-transmitted representations of entire galaxies for Worf to view on his screen? In today's world, electrons can course only so fast down circuits, no matter how close we jam the circuits together. That's why we're going to move from silicon circuitry to something else: maybe optical computers, maybe quantum computers, maybe some combination of approaches. So how does the LCARS screen keep up with the FTL-speed drawings and three-dimensional renderings? What kind of graphics cards are in those Trek consoles anyway?

The Processor

The Technical Manual tells us that the main computer system is “responsible in some way for the operation of virtually every other system of the vehicle.” What does this mean?

To be blunt: The main computer system is a gigantic 1970s mainframe. Without it, nothing on the ship works. Even the way it's described reminds us of the way technical writers in the late 1970s described computers: “The computer is directly analogous to the autonomic nervous system of a living being” and “The heart of the main computer system is a set of three redundant main processing cores.” By the mid-1980s, the use of “nervous system” and “heart” by technical writers was passé.

The CPU, or central processing unit, is called the computer's heart because it controls major system functions. Without a heart, you die. Without a CPU, the computer dies. The nervous system analogy refers to the networking, the cables, the wires, and the flow of electrons: just as in our bodies, signals move through the nerves by means of membrane potentials and neurotransmitters.

These analogies don't work very well anymore. For one thing, our computer systems are more like a web of interconnected bodies and brains than a single being with a heart and a nervous system. A 1990s computer is tied to many different networks. Smaller local area networks (LANs) may feed directly into larger company intranets, which may in turn tie directly into the global Internet. University networks hook to one another and also to the Internet, as do government agency networks. There is no central heart, no central nervous system.

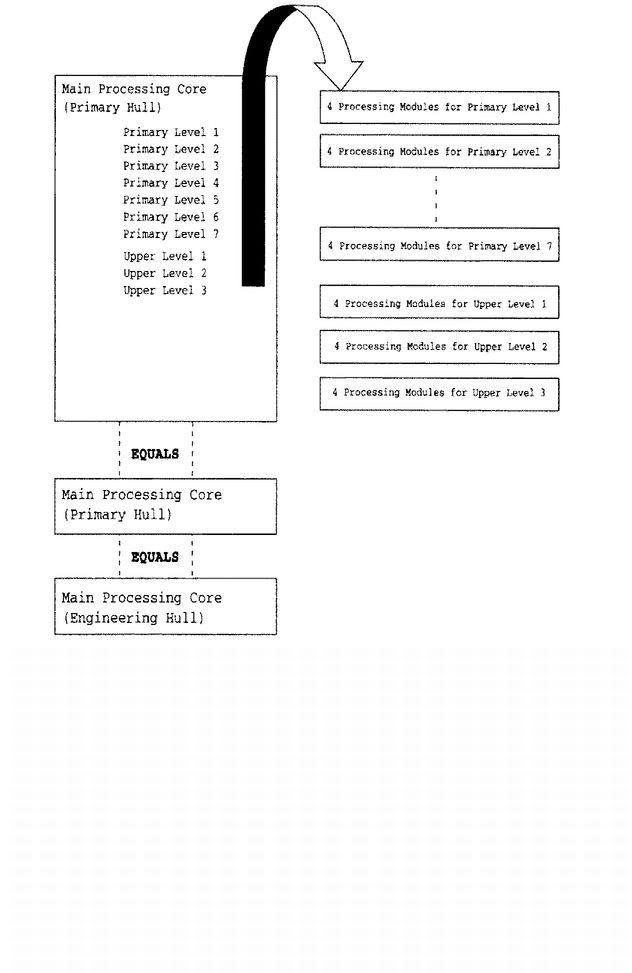

We have no clue how the primary and upper levels shown in Figure 2.2 differ. The Technical Manual states only that each main processing core “comprises seven primary and three upper levels, each level containing an average of four modules.” It appears that the main computer system of the Enterprise has an architecture much like that of a massively parallel supercomputer.

According to the textbook Computer Architecture: A Quantitative Approach,[2] a processor is “the core of the computer and contains everything except the memory, input, and output. The processor is further divided into computation and control.” Processing performance is often described in terms of clock cycles per instruction and clock cycle time, with the clock synchronizing the propagation of signals throughout the computer. Processing speed is commonly defined as operations per second. In 1984, one of the authors thought it was cool to be part of a team that created a superminicomputer that processed ten million operations per second. Big deal. In June of 1997, Intel built a supercomputer that executed 1.34 trillion operations per second. This computer looked like the Enterprise mainframe. Engineers had to crawl through Jefferies tubes (or their Earth-based equivalent) to access 9,200 Pentium Pro processors in 86 system cabinets.
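The two measures mentioned above, clock cycles per instruction (CPI) and clock cycle time, combine in the standard CPU performance equation: CPU time = instruction count × CPI × clock cycle time. The Python sketch below applies that equation and then does the arithmetic on the figures quoted above. It treats one instruction as one operation, which is a simplification, and the 200 MHz clock and CPI of 2 in the example are hypothetical values chosen only to show the calculation.

```python
def cpu_time_seconds(instruction_count: float, cpi: float, cycle_time_s: float) -> float:
    """CPU time = instruction count x clock cycles per instruction x clock cycle time."""
    return instruction_count * cpi * cycle_time_s


def instructions_per_second(clock_hz: float, cpi: float) -> float:
    """Execution rate = clock rate / average clock cycles per instruction."""
    return clock_hz / cpi


# Figures quoted in the text.
SUPERMINI_OPS_PER_S = 10e6        # 1984 superminicomputer: ten million operations/s
INTEL_1997_OPS_PER_S = 1.34e12    # June 1997 Intel supercomputer: 1.34 trillion operations/s
INTEL_1997_PROCESSORS = 9_200     # Pentium Pro processors

speedup = INTEL_1997_OPS_PER_S / SUPERMINI_OPS_PER_S              # about 134,000 times faster
ops_per_processor = INTEL_1997_OPS_PER_S / INTEL_1997_PROCESSORS  # about 146 million ops/s each

# Hypothetical processor: 200 MHz clock (5 ns cycle), average CPI of 2.
rate = instructions_per_second(200e6, 2.0)    # 100 million instructions/s
elapsed = cpu_time_seconds(1e8, 2.0, 5e-9)    # 100 million instructions take about 1 s
```

The ratio makes the “Big deal” concrete: thirteen years separate a machine doing ten million operations per second from one doing about 134,000 times as many.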

As seen in Figure 2.2, the main computer system of the Enterprise consists of:

(10 levels) × (4 processing modules per level) = 40 processing modules per main processing core

That's not even close to the 9,200 processors in the 1997 Intel supercomputer. But as we'll see, each Trek processing module contains hundreds of thousands of nanoprocessors.
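The module count can be extended with a few lines of Python. The level and module counts come from the Technical Manual figures quoted above; the number of nanoprocessors per module is an assumption (we plug in 200,000 as a conservative reading of “hundreds of thousands”), so the resulting totals are illustrative only. Because the three main processing cores are described as redundant, they may duplicate one another's work rather than triple the capacity, so the sketch keeps per-core and ship-wide counts separate.

```python
LEVELS_PER_CORE = 7 + 3               # seven primary + three upper levels
MODULES_PER_LEVEL = 4                 # "an average of four modules" per level
REDUNDANT_CORES = 3                   # three redundant main processing cores
NANOPROCESSORS_PER_MODULE = 200_000   # assumption: low end of "hundreds of thousands"
INTEL_1997_PROCESSORS = 9_200         # processors in the 1997 Intel supercomputer

modules_per_core = LEVELS_PER_CORE * MODULES_PER_LEVEL                   # 40
modules_ship_wide = modules_per_core * REDUNDANT_CORES                   # 120
nanoprocessors_per_core = modules_per_core * NANOPROCESSORS_PER_MODULE   # 8,000,000
ratio_to_intel = nanoprocessors_per_core / INTEL_1997_PROCESSORS         # roughly 870
```

Even under this conservative assumption, a single core would hold several hundred times as many processing elements as the 1997 Intel machine.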

FIGURE 2.2 Main Computer System
